I have long been fascinated by copyright law. It's one of those arcane, behind-the-scenes forces that govern so much of our world and slip by our notice most of the time. For me, it all started with the brilliant Mike Rugnetta's "Future of Fandoms" video on PBS Ideas Channel.

The video introduced me to the Organization for Transformative Works. As they say of themselves:

The Organization for Transformative Works (OTW) is a nonprofit organization established by fans to serve the interests of fans by providing access to and preserving the history of fanworks and fan culture in its myriad forms. We believe that fanworks are transformative and that transformative works are legitimate.

Our projects include Archive of Our Own, Legal Advocacy, Fanlore, Transformative Works and Cultures and Open Doors.

We envision a future in which all fannish works are recognized as legal and transformative and are accepted as a legitimate creative activity. We are proactive and innovative in protecting and defending our work from commercial exploitation and legal challenge. We preserve our fannish economy, values, and creative expression by protecting and nurturing our fellow fans, our work, our commentary, our history, and our identity while providing the broadest possible access to fannish activity for all fans.

Okay, so, it's an organization that runs a website that has a bunch of (often questionable) fanfiction on it?

Vox, Constance Grady

Yes, and it's one of many organizations that have grown up on the internet fighting for fairer copyright law. Copyright law is about as complex and difficult to understand as subjects like this come. It sits at the fascinating intersection of legal traditions, cultural understandings, economic interests, and public perceptions. But in that complexity is a great deal that's worth focusing on.

The United States, with its incredible cultural heft globally, has been a site of intense copyright law fights for well over half a century. At the epicenter of these debates has long been The Mouse. The last time copyright law came into the public discourse in a big way was in 1998, when the US Congress passed the Copyright Term Extension Act, or, as it was derisively known, "the Mickey Mouse Protection Act."

Treatises have been written on the legalities of how and why that law was passed, but its immediate material impact was to shift the timelines of how copyright expired. As Wikipedia outlines it:

Following the Copyright Act of 1976, copyright would last for the life of the author plus 50 years (or the last surviving author), or 75 years from publication or 100 years from creation, whichever is shorter for a work of corporate authorship (works made for hire) and anonymous and pseudonymous works. The 1976 Act also increased the renewal term for works copyrighted before 1978 that had not already entered the public domain from 28 years to 47 years, giving a total term of 75 years.

The 1998 Act extended these terms to life of the author plus 70 years and for works of corporate authorship to 120 years after creation or 95 years after publication, whichever end is earlier. For works published before January 1, 1978, the 1998 act extended the renewal term from 47 years to 67 years, granting a total of 95 years.
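To make the arithmetic concrete, here is a minimal Python sketch of the terms quoted above. It is a simplification under my own assumptions: it works in whole calendar years, ignores renewal formalities and other edge cases, and the names (`TERMS`, `individual_term_end`, and so on) are hypothetical helpers of mine, not anything drawn from the statutes.

```python
# Term lengths in years, taken from the Wikipedia summary quoted above.
# Tuple order: (after author's death, after publication, after creation)
TERMS = {
    "1976 Act": (50, 75, 100),
    "1998 Act": (70, 95, 120),
}

# Total term for works published before 1978:
# the initial 28 years plus the renewal term under each Act.
PRE_1978_TOTAL = {
    "1976 Act": 28 + 47,  # 75 years
    "1998 Act": 28 + 67,  # 95 years
}


def individual_term_end(act, death_year):
    """Last year of protection for a work by an individual author."""
    return death_year + TERMS[act][0]


def corporate_term_end(act, publication_year, creation_year):
    """Last year of protection for a work made for hire (whichever ends first)."""
    _, after_publication, after_creation = TERMS[act]
    return min(publication_year + after_publication,
               creation_year + after_creation)


def pre_1978_term_end(act, publication_year):
    """Last year of protection for a work published before 1978."""
    return publication_year + PRE_1978_TOTAL[act]


if __name__ == "__main__":
    # A hypothetical work published in 1928.
    for act in TERMS:
        print(act, "->", pre_1978_term_end(act, 1928))
```

For a hypothetical work published in 1928, the sketch gives 2003 under the 1976 terms and 2023 under the 1998 terms: the same twenty-year shift the extension applied across the board.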

Fast-forward to today and what has changed?

Well, large language models (LLMs) or, as you might know them better, AI.

In a brilliant piece for The Verge, Wes Davis writes about how Sarah Silverman and others are suing OpenAI and Meta (two of the most notable LLM creators) for copyright infringement.

The Verge

Before getting into the case, it's important to quickly say two things:

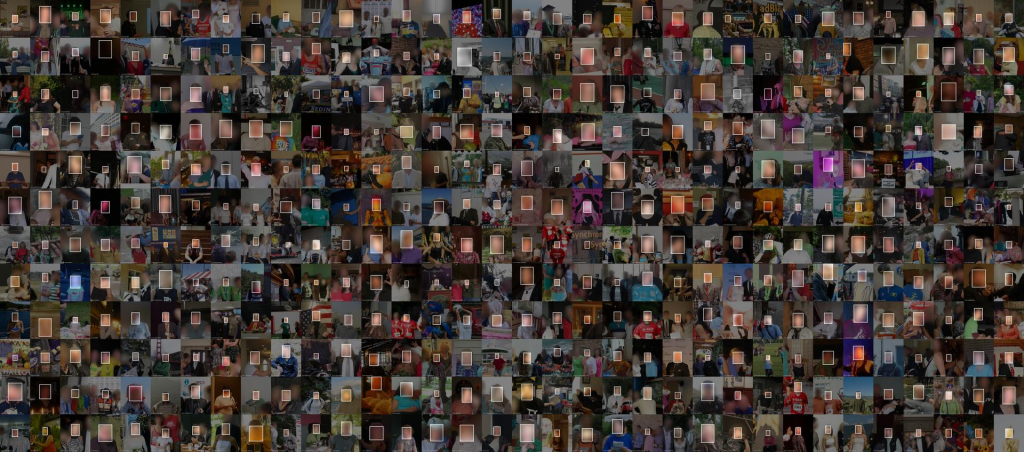

- LLMs like ChatGPT rely on unfathomably large amounts of what is called "unlabelled text" (i.e., huge reams of data that are too massive for humans to categorize on their own) that an artificial neural network reviews in a variety of ways to create distributions of words that have been probabilistically determined to be suitable to a given prompt.

- These massive amounts of text (the "large" part of a large language model) are one of the linchpins of what makes programs like ChatGPT so interesting and exciting: they create the semblance of "natural language" that can make these programs feel so lifelike. But in downloading what amounts to a sizable part of the internet into these models, the chance of including things you're not supposed to (like copyrighted materials) grows dramatically.
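For readers who want a feel for what "distributions of words" means, here is a toy Python sketch. It is emphatically not how ChatGPT or LLaMA work: real LLMs use neural networks trained on vast corpora, while this simply counts which word follows which in a made-up sentence. The function names and the tiny corpus are my own assumptions, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it in the text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for previous, following in zip(words, words[1:]):
        counts[previous][following] += 1
    return counts


def next_word_distribution(counts, word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    following = counts.get(word.lower())
    if not following:
        return {}  # the word never appeared with a successor
    total = sum(following.values())
    return {w: c / total for w, c in following.items()}


# A made-up, unlabelled "corpus"; nobody has tagged or categorized it.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram(corpus)

if __name__ == "__main__":
    print(next_word_distribution(model, "the"))
```

Even in this toy, everything the model "knows" comes from the text it was fed, which is why the provenance of that text matters so much in what follows.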

With that in mind, here's what the plaintiffs are saying:

In the OpenAI suit, [Sarah Silverman, as well as authors Christopher Golden and Richard Kadrey] offers exhibits showing that when prompted, ChatGPT will summarize their books, infringing on their copyrights. Silverman’s Bedwetter is the first book shown being summarized by ChatGPT in the exhibits, while Golden’s book Ararat is also used as an example, as is Kadrey’s book Sandman Slim. The claim says the chatbot never bothered to “reproduce any of the copyright management information Plaintiffs included with their published works.”

I'm obviously not a copyright lawyer, but I can imagine that in a more straightforward case of someone photocopying pages from Silverman's book and distributing them en masse to millions of people, this would likely be pretty much open-and-shut. But here's where it gets more interesting, and where Meta and OpenAI are not (necessarily) immediately the bad guys:

They relied on the use of huge libraries of data. In Meta's description of its LLaMA models, the company points to a variety of sources used to train them, including datasets from ThePile. As Wes Davis outlines, ThePile was itself created by another company, EleutherAI, which draws on a different library, the Bibliotik private tracker. As the filings for the class action lawsuit say:

As noted in Paragraph 31, supra, the OpenAI Books2 dataset can be estimated to contain about 294,000 titles. The only “internet-based books corpora” that have ever offered that much material are notorious “shadow library” websites like Library Genesis (aka LibGen), Z-Library (aka Bok), Sci-Hub, and Bibliotik. The books aggregated by these websites have also been available in bulk via torrent systems. These flagrantly illegal shadow libraries have long been of interest to the AI-training community: for instance, an AI training dataset published in December 2020 by EleutherAI called “Books3” includes a recreation of the Bibliotik collection and contains nearly 200,000 books. On information and belief, the OpenAI Books2 dataset includes books copied from these “shadow libraries”, because those are the most sources of trainable books most similar in nature and size to OpenAI’s description of Books2.

The Claims for Relief in their complaint state (among many things) that:

Plaintiffs never authorized OpenAI to make copies of their books, make derivative works, publicly display copies (or derivative works), or distribute copies (or derivative works). All those rights belong exclusively to Plaintiffs under copyright law. [emphasis added]

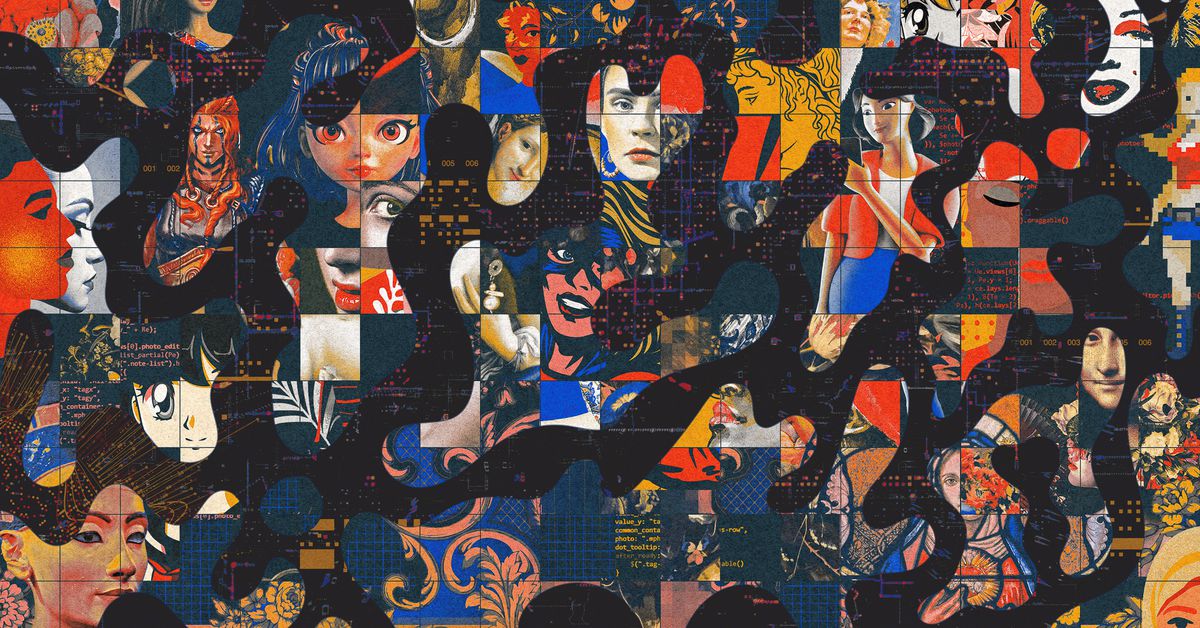

And this is only specific to books. There are other lawsuits and questions being raised in art, as the styles of artists are being copied by programs like Stable Diffusion - sometimes with almost direct replicas of existing, copyrighted works.

Again from The Verge, James Vincent goes further into this exploration to understand what the implications are across the wider terrain of copyright law and its interactions with AI.

The Verge

The entire article is fascinating, but I zeroed in on one element: whether or not AI can and should be allowed to use copyrighted material as part of its learning processes. As Vincent writes:

The justification used by AI researchers, startups, and multibillion-dollar tech companies alike is that using these images is covered (in the US, at least) by fair use doctrine, which aims to encourage the use of copyright-protected work to promote freedom of expression.

This is what brings us back to fandoms and copyright law: fair use. The Organization for Transformative Works (OTW) has long argued that artists and creators have the right to use materials without payment or permission if that use is "fair." (In Canada, we call this "fair dealing.") Importantly, as OTW has long reminded everyone: Courts in the U.S. have held that fair use is “not merely excused by the law, it is wholly authorized by the law.” The US court system weighs "four factors" to determine whether the use of a work is fair, and they include:

- the purpose and character of the use, including whether such use is of a commercial nature or is for nonprofit educational purposes;

- the nature of the copyrighted work;

- the amount and substantiality of the portion used in relation to the copyrighted work as a whole; and

- the effect of the use upon the potential market for or value of the copyrighted work.

Creative Commons, an organization I have a lot of respect for in their work to ensure fairness and balance in how copyright law is used, has engaged with this question head-on with the interests of the original creators and "transformers" in mind. Stephen Wolfson there quotes both academics and US case law which largely hold that AI models that use copyrighted works as data to learn on are likely within the bounds of fair use since their intent is not to make money on the individual use of those works - rather, it is part of a vast set of information that is being developed to serve a different purpose from a specific instance of transformation.

I think the most interesting part of the argument relates to the fourth factor of fair use. Wolfson notes how challenging this element will be to navigate and he, like me, seems to express genuine concern for creators who worry about being edged out of the market:

[W]hether Stable Diffusion and Midjourney harm the market for the works in their training data is difficult, in part because the way that courts think of this question can be a bit inconsistent. In one way, the answer must be yes, this use at least has the potential to harm the market for the original. That is, after all, one likely reason the plaintiffs filed this lawsuit in the first place — they are afraid that AI generated content will cut into their ability to profit off of their art. Indeed, any art has the potential of competing with other art, not necessarily because it fills the same niche, but because attention is limited, and AI generated content has the advantage of being able to be made in a quick, automated fashion.

As he writes next, though, in a passage I found striking, the market-harm argument may take on a different dimension when one imagines that material produced by an AI is used by someone who would never have purchased the original works in the first place.

However, this may not be the best way to think about market harm in the context of using images as training data. As mentioned above, we need to think about this question in the context of the transformative purpose. In Campbell v. Acuff Rose, the Supreme Court wrote that the more transformative the purpose, the less likely it is that it will be a market substitute for the original. Given this, perhaps it is better to ask whether this use as training data, not as pieces of art, harms the market for the original. This use by Stability AI and Midjourney exists in an entirely different market from the original works. It does not usurp the market of the original and it does not operate as a market substitute because the original works were not in the data market. Moreover, this use as training data does not “supersede the objects” of the originals and does not compete in the aesthetic market with the originals.

Wolfson, speaking for himself, says that training for AI models should absolutely fall under fair use, and therefore Silverman's and many others' lawsuits would fail.

But it's not clear that everyone in the Creative Commons world and beyond would see this as true for works generated by LLMs or other AI. Returning to James Vincent at The Verge:

Consider the same text-to-image AI model deployed in different scenarios. If the model is trained on many millions of images and used to generate novel pictures, it’s extremely unlikely that this constitutes copyright infringement. The training data has been transformed in the process, and the output does not threaten the market for the original art. But, if you fine-tune that model on 100 pictures by a specific artist and generate pictures that match their style, an unhappy artist would have a much stronger case against you.

“If you give an AI 10 Stephen King novels and say, ‘Produce a Stephen King novel,’ then you’re directly competing with Stephen King. Would that be fair use? Probably not,” says [Vanderbilt law professor Daniel] Gervais.

For me, personally, the case for using copyrighted material for training is pretty clear. We increase our understanding of these processes as these models use uncountably large parts of human language as the terrain on which they build their mental muscles. But it's very clear that many of these companies are being quite creative to get around copyright law during the training process (a practice Andy Baio has dubbed "data laundering"), to then build AI with clear commercial purposes.

Waxy.org, Andy Baio

Vincent closes his article by asking the crucial question of whether or not there's a way forward here. He notes that there are some opportunities out there and lessons from places like the music industry, but he seems to conclude that the damage may already have been done.

On a personal level, I'm profoundly conflicted. I've spent my entire young life supporting and believing deeply in the need to maintain a genuine creative commons, protect fair use, and ensure that major corporations are not gatekeeping cultural and intellectual products that can and should be to the benefit of all humanity. And yet... Baio's article and these court filings make quite a compelling case that many companies are leveraging long-held principles of internet freedom to wash their hands ethically of the fact that they stand to benefit immeasurably from the technology they are developing while doing it on the backs of mostly underappreciated and underpaid creators.

I don't have a great answer to this, and I think the legalities are so complex that there's likely no elegant solution from a legal perspective here. The closest solution by analogy I can come up with is that of a "Superfund," which is a legal and financial mechanism used in the US to help clean up hazardous sites after environmental contamination. Formally known as the Comprehensive Environmental Response, Compensation, and Liability Act (CERCLA), Superfund is run by the Environmental Protection Agency (EPA) and allows the EPA to clean up contaminated sites as well as force the parties responsible for the contamination to either perform cleanups or reimburse the government for EPA-led cleanup work. Its goals are to:

- Protect human health and the environment by cleaning up contaminated sites;

- Make responsible parties pay for cleanup work;

- Involve communities in the Superfund process; and

- Return Superfund sites to productive use.

Part of what makes this analogous to me is the fact that we're talking about a commons - in this case, not an environmental one, like a lake, but a digital one.

WIRED, Eli Pariser

The original Superfund was a massive trust, financed by a tax on petroleum and chemical manufacturing companies. Superfund projects are now in deep fiscal danger because they are underfunded and rely on limited general revenue, but the principles remain the same.

As we enter into the next phase of our ongoing digital gold rush, it's interesting to imagine a framework that ensures that the incoming benefits of these technologies are equitably shared with those whose work made them possible in the first place.

(As an aside, it was interesting to write all of this conscious of the fact that I am drawing heavily on journalists like Davis and Vincent, even while what I am writing would definitely fall under the category of fair use. Their work was formative for what I wrote here, and I hope my liberal mentions of them speak to my respect and admiration for their work.)
